The 2012 paper in one paragraph

In 2012, Emily Falk, Elliot Berkman, and Matthew Lieberman published "From neural responses to population behavior: neural focus group predicts population-level media effects" in Psychological Science[1]. Thirty-one smokers watched three anti-smoking campaigns inside an fMRI scanner. The researchers measured medial prefrontal cortex response to each campaign. After the campaigns aired nationally, Falk's team compared the per-campaign neural signal to the per-campaign call volume at 1-800-QUIT-NOW. The neural signal predicted the call volume. Self-report of campaign effectiveness did not.

That one result changed how a generation of researchers thought about neuroforecasting. Nearly fifteen years later, it has held up and been extended.

Thirty-one scanned smokers predicted national call volume. Self-report from the same thirty-one did not. That asymmetry is the whole argument for neuroforecasting.

The argument that makes it work

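Aggregate behavior averages out individual-level noise. Each participant's response is a noisy read on a shared signal, and averaging n participants with independent noise shrinks that noise by roughly the square root of n. A small neural sample, correctly analyzed, can therefore predict population averages even when prediction for any one individual is modest. This is the same statistical fact that lets a handful of well-placed weather stations inform a regional forecast.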
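The averaging argument can be checked with a small simulation. This is a minimal sketch on synthetic numbers, not a reanalysis of any study: the 40 messages, the noise level, and the function names are all assumptions chosen for illustration. Each simulated participant responds to each message with the message's true population-level effect plus heavy individual noise.

```python
import random
import statistics

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)

def simulate(n_subjects=30, n_messages=40, noise_sd=3.0, seed=0):
    """Compare one-person prediction with sample-average prediction.

    All values are synthetic. noise_sd is three times the spread of the
    true effects, so any single participant is a poor predictor.
    """
    rng = random.Random(seed)
    true_effect = [rng.gauss(0.0, 1.0) for _ in range(n_messages)]
    # responses[s][m] = true effect of message m + noise for subject s
    responses = [
        [effect + rng.gauss(0.0, noise_sd) for effect in true_effect]
        for _ in range(n_subjects)
    ]
    # Average correlation when only one participant's data is used.
    single_r = statistics.fmean(
        [pearson(row, true_effect) for row in responses]
    )
    # Correlation when the sample is averaged per message first.
    sample_mean = [statistics.fmean(column) for column in zip(*responses)]
    pooled_r = pearson(sample_mean, true_effect)
    return single_r, pooled_r

single_r, pooled_r = simulate()
print(f"typical single-participant r: {single_r:.2f}")
print(f"sample-average r:             {pooled_r:.2f}")
```

Under these assumptions the sample average tracks the true effects far better than a typical individual does, because the per-message noise in the average falls to roughly noise_sd divided by the square root of n_subjects. The simulation assumes independent noise across participants; shared bias in a sample would not average out.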

What the neural signal contributes, beyond self-report, is information that does not survive verbal introspection. The mPFC is a value-integration hub. It reflects how motivational salience gets combined across features, which is not what a participant says when they are asked whether they liked an ad. Self-report filters through social desirability, post-hoc rationalization, and verbal-fluency asymmetries. The brain signal does not.

What replicated

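Falk 2010 and 2011. An earlier paper in Journal of Neuroscience[2] showed that mPFC activity during persuasive messages predicted behavior change (sunscreen use) over the following week. A 2011 follow-up in Health Psychology found that mPFC activity in 28 smokers predicted individual reduction in cigarette consumption at one-month follow-up, at r ≈ 0.49. Together these set up the population-level claim.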

Scholz, Baek, O'Donnell, Kim, Cappella, Falk 2017. PNAS[3] extended the paradigm to NYT health articles. mPFC plus value-system activity in roughly 40 scanner subjects predicted which articles went viral among millions of readers.

Falk et al. 2016. SCAN[4]. mPFC and precuneus predicted email click-through rates for health messages.

Berns and Moore 2012. Journal of Consumer Psychology[5]. Nucleus accumbens response in teens predicted subsequent song popularity better than self-report. The neural signal explained roughly 30 percent of eventual sales variance three years out, from a small scanner sample.

The pattern is consistent across paradigms. A small neural sample in the mPFC or value-system regions, combined with an aggregate outcome measure, predicts what the self-report from the same sample does not.

What the critics pushed back on

The legitimate critiques focus on small stimulus-set sizes (three campaigns in Falk 2012, for example), the risk of multiple-comparison errors, and the reverse-inference framing that sometimes crept into popular coverage even when Falk's own papers did not make the naive claim. The Poldrack critique[6] applies to any study that reads cognitive states off regional activations, though Falk's method is forward-predictive and largely sidesteps it (see reverse inference twenty years on).
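The small-stimulus-set critique comes down to simple counting. The sketch below is a generic illustration, not a reanalysis of Falk's data, and the function name is hypothetical. If a predictor has no relationship to the outcome, every ordering of the campaigns is equally likely, so the chance of a perfect rank match is one over the number of possible orderings.

```python
from itertools import permutations

def exact_rank_match_p(n_items):
    """Chance that a random ordering of n_items matches a fixed ordering."""
    target = tuple(range(n_items))
    orderings = list(permutations(range(n_items)))
    matches = sum(1 for ordering in orderings if ordering == target)
    return matches / len(orderings)

for n in (3, 5, 10):
    print(n, exact_rank_match_p(n))
```

With three campaigns there are only six orderings, so even a perfect rank match happens by chance one time in six. That is why a ranking over three stimuli cannot carry much evidential weight on its own, and why replication across larger stimulus sets matters.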

The pushback that did not hold up was the framing that neuroforecasting was a fad. The method has replicated across labs, paradigms, and outcome measures for fifteen years.

What changed with foundation models

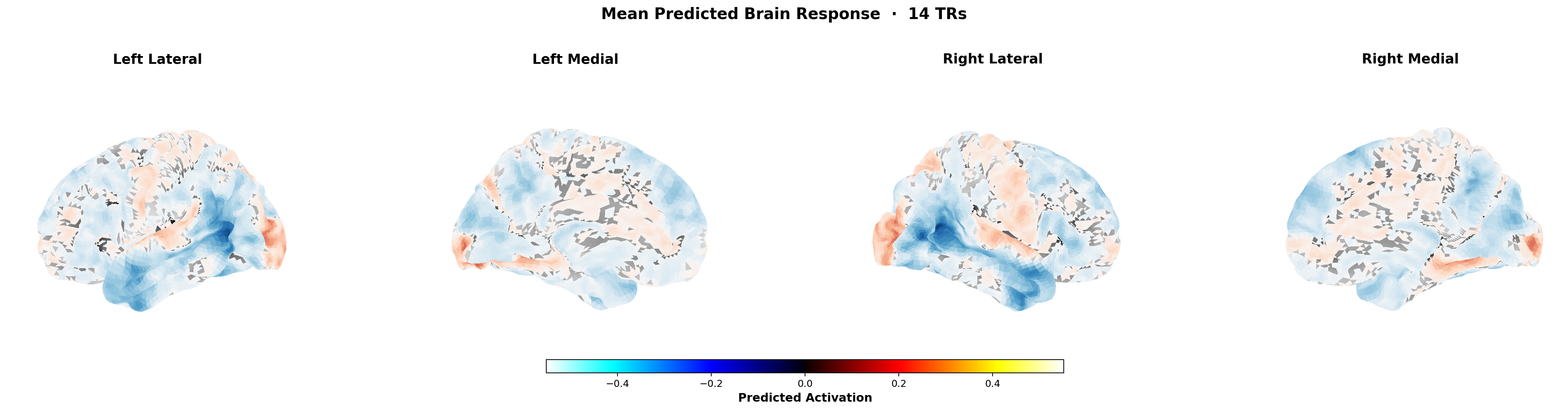

Forward encoding models, such as TRIBE v2 (see TRIBE v2 explained) and the models behind the Huth Lab semantic atlas, now predict cortical response in silico from new stimuli, using knowledge distilled from thousands of hours of public fMRI. (MindEye, often mentioned alongside them, works in the opposite direction, decoding images from brain activity.) The expensive step, scanning a new sample for every study, is no longer required.
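For readers new to the term, a forward encoding model maps stimulus features to predicted brain response. The sketch below shows the idea in its simplest classical form, a ridge regression fit on synthetic data. It is not the architecture of TRIBE v2 or any other named model; the feature counts, noise level, and function names are assumptions made for illustration.

```python
import numpy as np

def fit_ridge_encoder(features, responses, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y.

    features:  (n_stimuli, n_features) stimulus descriptors
    responses: (n_stimuli, n_voxels) measured response per stimulus
    """
    n_features = features.shape[1]
    gram = features.T @ features + alpha * np.eye(n_features)
    return np.linalg.solve(gram, features.T @ responses)

def predict_response(weights, new_features):
    """Predict responses to unseen stimuli from their features alone."""
    return new_features @ weights

# Synthetic stand-in for a training set; no real fMRI data is used here.
rng = np.random.default_rng(0)
n_train, n_new, n_features, n_voxels = 200, 5, 8, 3
true_w = rng.normal(size=(n_features, n_voxels))
train_x = rng.normal(size=(n_train, n_features))
train_y = train_x @ true_w + 0.5 * rng.normal(size=(n_train, n_voxels))

weights = fit_ridge_encoder(train_x, train_y, alpha=1.0)

# "New stimuli": features are known, but no scan is ever run on them.
new_x = rng.normal(size=(n_new, n_features))
predicted = predict_response(weights, new_x)
print(predicted.shape)
```

Once the weights are fit, predicting the response to a new stimulus is a single matrix product, which is why the per-study cost drops so sharply. Modern foundation models replace the hand-built features and linear map with learned representations, but the direction of prediction, from stimulus to brain, is the same.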

The Falk paradigm used to require an IRB, a scanner, thirty participants, and a six-month protocol. In 2026 it runs on a server in seconds. The prediction is noisier than a well-run scan, but the directional answer is roughly the same.

What has not changed

The interpretive framework is still valuable: the mPFC-as-value-integration story, the population-average reasoning that makes small-n neural data forecast large-n outcomes, and the humility that the method is directional, not deterministic; moderate-effect, not magic.

A new generation of commercial products claims neural prediction without grounding in the Falk tradition. Those products often reinvent problems that Falk, Berkman, Lieberman, Scholz, and Cappella already worked out. Reading the actual papers before buying the subscription is a cheap diligence step that saves serious money.

Implications for 2026 applied work

The Falk-style neural signal is one of four we integrate (see the four signals framework). Alone, it is directional. Fused with linguistic, cultural, and historical signals, and calibrated against real outcomes, it becomes useful infrastructure. That is the project.
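One simple way to picture fusion and calibration is a weighted combination of the four signals, with the weights fit against observed outcomes. The sketch below is a hypothetical illustration on synthetic data, not a description of the actual pipeline: the least-squares approach, the hidden weights, the sample size, and all names are assumptions.

```python
import numpy as np

SIGNAL_NAMES = ("neural", "linguistic", "cultural", "historical")

def zscore(columns):
    """Standardize each signal so the fitted weights are comparable."""
    return (columns - columns.mean(axis=0)) / columns.std(axis=0)

def build_design(signals):
    """Z-scored signals plus a constant column for the intercept."""
    return np.column_stack([zscore(signals), np.ones(len(signals))])

def calibrate(signals, outcomes):
    """Least-squares weights mapping the four signals to real outcomes."""
    coef, *_ = np.linalg.lstsq(build_design(signals), outcomes, rcond=None)
    return coef

# Synthetic campaigns: one row per campaign, one column per signal.
rng = np.random.default_rng(1)
signals = rng.normal(size=(60, len(SIGNAL_NAMES)))
hidden_weights = np.array([0.5, 0.3, 0.1, 0.4])  # made up for the demo
outcomes = signals @ hidden_weights + 0.3 * rng.normal(size=60)

coef = calibrate(signals, outcomes)
fused = build_design(signals) @ coef

neural_only_r = np.corrcoef(signals[:, 0], outcomes)[0, 1]
fused_r = np.corrcoef(fused, outcomes)[0, 1]
print(f"neural alone: r = {neural_only_r:.2f}")
print(f"fused:        r = {fused_r:.2f}")
```

The fit here is in-sample, so the fused score is guaranteed to correlate with outcomes at least as well as any single signal. A real calibration would be judged on held-out campaigns, where that guarantee no longer applies.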

Emily Falk's lab is still active at Penn. The field has extended her work to social media sharing, food choice, exercise interventions, and public health. Nearly fifteen years in, the original claim is better supported than it was the day Psychological Science published the paper.

References

- [1] Falk, Berkman, Lieberman. From neural responses to population behavior. Psychological Science 2012.

- [2] Falk, Berkman, Mann, Harrison, Lieberman. Predicting persuasion-induced behavior change. J Neurosci 2010.

- [3] Scholz et al. PNAS 2017.

- [4] Falk et al. Brain predictors of email clickthrough. SCAN 2016.

- [5] Berns and Moore. JCP 2012.

- [6] Poldrack. Can cognitive processes be inferred from neuroimaging data? TICS 2006.

- [7] Communication Neuroscience Lab, Annenberg School, UPenn.

- [8] Meta AI. TRIBE v2.