In 1922, the British mathematician Lewis Fry Richardson published his attempt to predict the weather by hand[12]. The calculation took him six weeks. The forecast was wrong. A hundred years later, the five-day forecast is more accurate than the two-day forecast was in 1980. How that happened is also the story of how predicting human response to content will be solved.

The shape of every successful prediction system

Every prediction system that has worked in the real world has the same shape: signal integration, aggregation, updating, and honest uncertainty. Weather. Public health. Stock indices. Flu. Elections. Chess. Protein folding. Nothing predicts the future from a single sensor. Everything that predicts anything combines multiple instruments and publishes calibrated error bars.

Predicting human response to content is the same kind of problem. It will be solved the same way. Aggregating neural, linguistic, cultural, and historical signals (see the four signals framework), with honest uncertainty, is not a bold bet. It is the engineering default every adjacent field has already converged on.

The ambitious part is applying signal integration to culture, which is newer than weather. The shape of the solution is not in doubt.
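As a minimal sketch of what "aggregating signals with honest uncertainty" can mean, assume each signal family produces an independent estimate of the same quantity together with a standard error. The `fuse` helper, the engagement-score framing, and every number below are hypothetical. Inverse-variance weighting is a standard textbook technique used here for illustration only, not a description of any particular production system.

```python
import math

def fuse(estimates):
    """Combine independent (mean, std_error) estimates by inverse-variance weighting.

    Returns the fused mean and the standard error of the fused mean.
    """
    weights = [1.0 / (se ** 2) for _, se in estimates]
    total = sum(weights)
    mean = sum(w * m for w, (m, _) in zip(weights, estimates)) / total
    return mean, math.sqrt(1.0 / total)

# Hypothetical engagement-score estimates, one per signal family: (mean, std_error).
signals = {
    "neural": (0.62, 0.10),
    "linguistic": (0.55, 0.08),
    "cultural": (0.70, 0.15),
    "historical": (0.58, 0.05),
}

fused_mean, fused_se = fuse(list(signals.values()))

# Approximate 95% interval, assuming roughly normal errors.
low = fused_mean - 1.96 * fused_se
high = fused_mean + 1.96 * fused_se
print(f"fused estimate: {fused_mean:.3f} (95% interval {low:.3f} to {high:.3f})")
```

Under the independence assumption, the fused standard error is smaller than that of the best single signal, which is the statistical core of the argument for integration. Real signals are correlated, so a working system would need to model that correlation rather than assume it away.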

The weather story in detail

Richardson's manual forecast failed largely because the observations he started from were not balanced against his equations, and a one-shot hand calculation had no way to absorb new observations and correct itself. The math was right. The integration was wrong.

The breakthroughs came in stages. In 1950, Charney, Fjørtoft, and von Neumann ran the first successful numerical weather prediction on the ENIAC[1]: roughly 24 hours of compute for a 24-hour forecast. The point was not speed. The point was that a single consistent physical model could be numerically integrated over a grid. In 1963, Edward Lorenz's "Deterministic Nonperiodic Flow"[2] showed that tiny differences in initial conditions grow until they dominate the forecast, the result later popularized as the butterfly effect. That set a fundamental limit on deterministic forecasting. The field learned to stop chasing one-shot accuracy and started thinking in ensembles.

Operational centers then put the idea into practice. ECMWF, which began operational forecasting in 1979, paired data assimilation with ensemble forecasting, which it has run operationally since 1992: not one simulation, but many, fused against streaming observations. Bauer, Thorpe, and Brunet's 2015 Nature review[3] called this the "quiet revolution of numerical weather prediction." Forecast skill improved by roughly one day per decade through steady gains in integration and assimilation, not through any single breakthrough.
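The sensitivity Lorenz described, and the reason forecasters moved to ensembles, can be seen in a few lines. The sketch below is illustrative only. It integrates the Lorenz 1963 system with its standard parameters (sigma = 10, rho = 28, beta = 8/3) using a fourth-order Runge-Kutta step, starts 20 members from almost identical initial conditions, and reports how far apart they are early and late in the run. The member count, perturbation size, step size, and function names are assumptions chosen for the example, and no data assimilation is included.

```python
import random
import statistics

def lorenz_deriv(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz 1963 equations."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)

def rk4_step(state, dt):
    """Advance one state by one fourth-order Runge-Kutta step."""
    def shifted(base, k, scale):
        return tuple(b + scale * ki for b, ki in zip(base, k))

    k1 = lorenz_deriv(state)
    k2 = lorenz_deriv(shifted(state, k1, dt / 2))
    k3 = lorenz_deriv(shifted(state, k2, dt / 2))
    k4 = lorenz_deriv(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )

def ensemble_spread(n_members=20, noise=1e-6, dt=0.01,
                    checkpoints=(100, 4000), seed=0):
    """Run a perturbed ensemble; return the std dev of x at each checkpoint step."""
    rng = random.Random(seed)
    members = [
        tuple(1.0 + rng.gauss(0.0, noise) for _ in range(3))
        for _ in range(n_members)
    ]
    spreads = {}
    for step in range(1, max(checkpoints) + 1):
        members = [rk4_step(m, dt) for m in members]
        if step in checkpoints:
            spreads[step] = statistics.pstdev([m[0] for m in members])
    return spreads

spreads = ensemble_spread()
for step, spread in sorted(spreads.items()):
    print(f"t = {step * 0.01:5.1f}  spread in x = {spread:.3e}")
```

Early in the run the members are indistinguishable. Late in the run they have spread across the attractor, so any single run is only one sample from a distribution. The ensemble's spread is the forecast's uncertainty, made explicit.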

Other domains that moved from magic to infrastructure

Chess. In 1997, Deep Blue beat Garry Kasparov not because the program was smart in a new way, but because search and evaluation, integrated well, overwhelmed the human.

Jeopardy. IBM Watson, built on the DeepQA architecture[4], won in 2011 with an explicit signal-integration design: hundreds of components produced candidate answers and confidence scores, which a meta-classifier then fused.

Go. AlphaGo (2016)[5] combined a value network, a policy network, and Monte Carlo tree search. No single component was sufficient. The fusion was.

Protein folding. AlphaFold 2[6] and AlphaFold 3 combined evolutionary information, structural priors, and chemistry into a single predictive system. The biology field had resisted this combination for decades. Once fused, the problem collapsed.

Infectious disease. The CDC's FluView and FluSight ensemble[7], the US COVID-19 Forecast Hub[8], and similar systems fuse multiple epidemiological models. No model wins alone. The ensemble wins.

Elections. Nate Silver's FiveThirtyEight model aggregates polls, fundamentals, and economic indicators. The meta-claim is the same: signal integration.
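One reason ensembles like the flu and election aggregates above hold up is arithmetic. Under squared error, the average of several forecasts is never worse than the average error of its members. The sketch below checks this on made-up numbers: the five forecasts, the observed value, and the variable names are all hypothetical. It also shows the limit of the guarantee, since one member can still beat the ensemble on a given target.

```python
def squared_error(pred, truth):
    """Squared difference between a forecast and the observed value."""
    return (pred - truth) ** 2

# Hypothetical weekly case-count forecasts from five models, plus the observed value.
member_forecasts = [1200.0, 950.0, 1400.0, 1100.0, 870.0]
observed = 1050.0

# Equal-weight ensemble: the plain mean of the member forecasts.
ensemble_forecast = sum(member_forecasts) / len(member_forecasts)

member_errors = [squared_error(f, observed) for f in member_forecasts]
mean_member_error = sum(member_errors) / len(member_errors)
ensemble_error = squared_error(ensemble_forecast, observed)

# How many individual models did worse than the ensemble on this target?
n_beaten = sum(e > ensemble_error for e in member_errors)

print(f"ensemble forecast:   {ensemble_forecast:.0f}")
print(f"ensemble sq. error:  {ensemble_error:.0f}")
print(f"mean member error:   {mean_member_error:.0f}")
print(f"members beaten:      {n_beaten} of {len(member_forecasts)}")
```

The ensemble is not guaranteed to beat the best model on any single target. What it removes is the need to know in advance which model will be best, and across many targets that is usually what matters.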

The humility cases

Not everything is tractable to the same degree. Individual stock-market returns are not predictable in a commercially exploitable way; the SPIVA scorecards[9] show 80+ percent of active managers underperform their benchmark over ten years. Long-horizon individual-level behavior prediction is similarly difficult.

We do not claim content prediction is easier than finance. We claim it is at least as tractable as long-range weather. Short-horizon aggregate-level prediction of how content will perform, once calibrated against real outcomes, is a problem with a signal-integration solution.

Prediction markets are another example of aggregation working: the Iowa Electronic Markets[10] have run since 1988, Polymarket reportedly handled over $3.6B in volume during the 2024 election cycle, and Kalshi operates under CFTC regulation. Markets are also signal integration systems, aggregating dispersed knowledge through prices[11].

What is new for cultural prediction

Three things have become true in the last two years that were never true before.

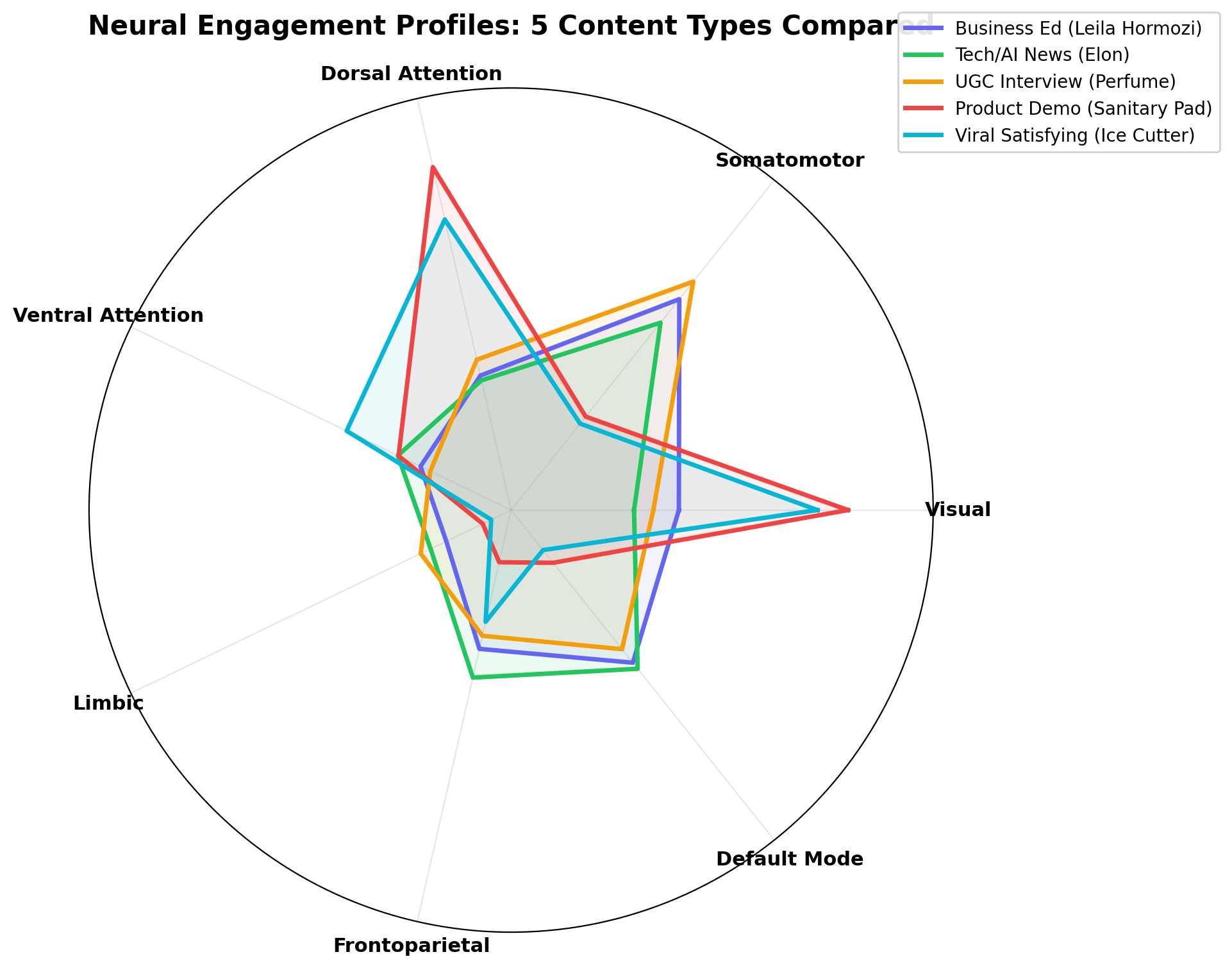

- Open forward neural encoding. TRIBE v2 (see TRIBE v2 explained) makes predicting cortical response to unreleased content a commodity operation.

- LLM-based linguistic and cultural modeling. Arousal, persuasion density, emotional arc, live zeitgeist proximity can be scored at near-zero marginal cost.

- Public ad archives. The Meta Ad Library (see the Meta Ad Library guide) plus EU DSA expansion provides the largest open historical corpus in advertising history.

All four signal families (neural, linguistic, cultural, historical) are accessible at commodity cost for the first time. The limiting factor has shifted from data to integration.

Climate models as the analogy

Climate models, like modern weather forecasts, are probabilistic, not deterministic. They output ranges, not single numbers. "80 percent chance of rain" is the right kind of output for a weather forecast, and the right kind of output for a content forecast. Vendors who claim single-point predictions with no uncertainty bands are selling oracles. Oracles fail.

Infrastructure that integrates signals and publishes probabilistic outputs with honest error bars is the durable position. That is what every mature forecasting field looks like. That is what cultural prediction will look like in 2030.
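"Honest error bars" can be checked rather than asserted. A minimal sketch, assuming a set of past probabilistic forecasts with known binary outcomes: the Brier score summarizes accuracy in one number, and a reliability table compares the stated probability with the observed frequency in each bin. The forecasts, outcomes, bin count, and function names below are hypothetical and chosen only to show the mechanics.

```python
from collections import defaultdict

def brier_score(forecasts, outcomes):
    """Mean squared difference between forecast probabilities and 0/1 outcomes."""
    return sum((p - o) ** 2 for p, o in zip(forecasts, outcomes)) / len(forecasts)

def reliability_table(forecasts, outcomes, n_bins=5):
    """Group forecasts into equal-width probability bins.

    Returns (mean forecast, observed frequency, count) for each non-empty bin.
    """
    bins = defaultdict(list)
    for p, o in zip(forecasts, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # keep p = 1.0 in the top bin
        bins[idx].append((p, o))
    rows = []
    for idx in sorted(bins):
        pairs = bins[idx]
        mean_p = sum(p for p, _ in pairs) / len(pairs)
        hit_rate = sum(o for _, o in pairs) / len(pairs)
        rows.append((mean_p, hit_rate, len(pairs)))
    return rows

# Hypothetical history: forecast P(creative beats its benchmark); 1 = it did.
forecasts = [0.9, 0.8, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.2, 0.1]
outcomes = [1, 1, 0, 1, 1, 0, 1, 0, 0, 0]

score = brier_score(forecasts, outcomes)
baseline = brier_score([0.5] * len(outcomes), outcomes)  # always say "50 percent"
rows = reliability_table(forecasts, outcomes)

print(f"Brier score: {score:.3f} (always-0.5 baseline: {baseline:.3f})")
for mean_p, hit_rate, count in rows:
    print(f"forecast ~{mean_p:.2f}  observed {hit_rate:.2f}  n={count}")
```

A well-calibrated forecaster shows observed frequencies close to the stated probabilities in every bin. Ten forecasts is far too few to judge that in practice. The point is that the check is mechanical and can be published alongside the predictions.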

What this implies for our industry

Vendors selling point predictions with no uncertainty bands will lose. Not because of a failed PR cycle. Because the buyers worth winning, the ones doing hundreds of millions in annual media, want calibration and will increasingly demand it.

The companies that publish their calibration (see why we publish), define their failure modes, and treat uncertainty as a first-class output will compound their trust. The companies that keep promising oracles will be absorbed into consolidated panel houses the way legacy neuromarketing vendors were absorbed into NielsenIQ (see neuromarketing in 2026).

What OpenAffect is building

Signal integration infrastructure. Four signal families. Calibration in public. Probabilistic outputs. No oracles.

Predicting human response to content is a hard problem. It is also not a new kind of problem. We are late adopters of a discipline that weather forecasting, public health, and protein folding have already put into practice. Catching up is not glamorous. It is where the work is.

References

- [1] Charney, Fjørtoft, von Neumann. Numerical integration of the barotropic vorticity equation. Tellus 1950.

- [2] Lorenz. Deterministic nonperiodic flow. J Atmos Sci 1963.

- [3] Bauer, Thorpe, Brunet. The quiet revolution of numerical weather prediction. Nature 2015.

- [4] Ferrucci et al. Building Watson. AI Magazine 2010.

- [5] Silver et al. Mastering the game of Go. Nature 2016.

- [6] Jumper et al. AlphaFold. Nature 2021.

- [7] Reich et al. Accuracy of real-time flu forecasting. PNAS 2019.

- [8] Cramer et al. US COVID-19 Forecast Hub. Scientific Data 2022.

- [9] SPIVA scorecards.

- [10] Iowa Electronic Markets.

- [11] Hayek. The use of knowledge in society. American Economic Review 1945.

- [12] Richardson. Weather Prediction by Numerical Process. Cambridge 1922.